Neural Networks & Deep Learning

Demystify the architecture behind modern AI — how neural networks process information and why depth matters.

Featured Video

But what is a Neural Network? (3Blue1Brown, YouTube)

Module Content

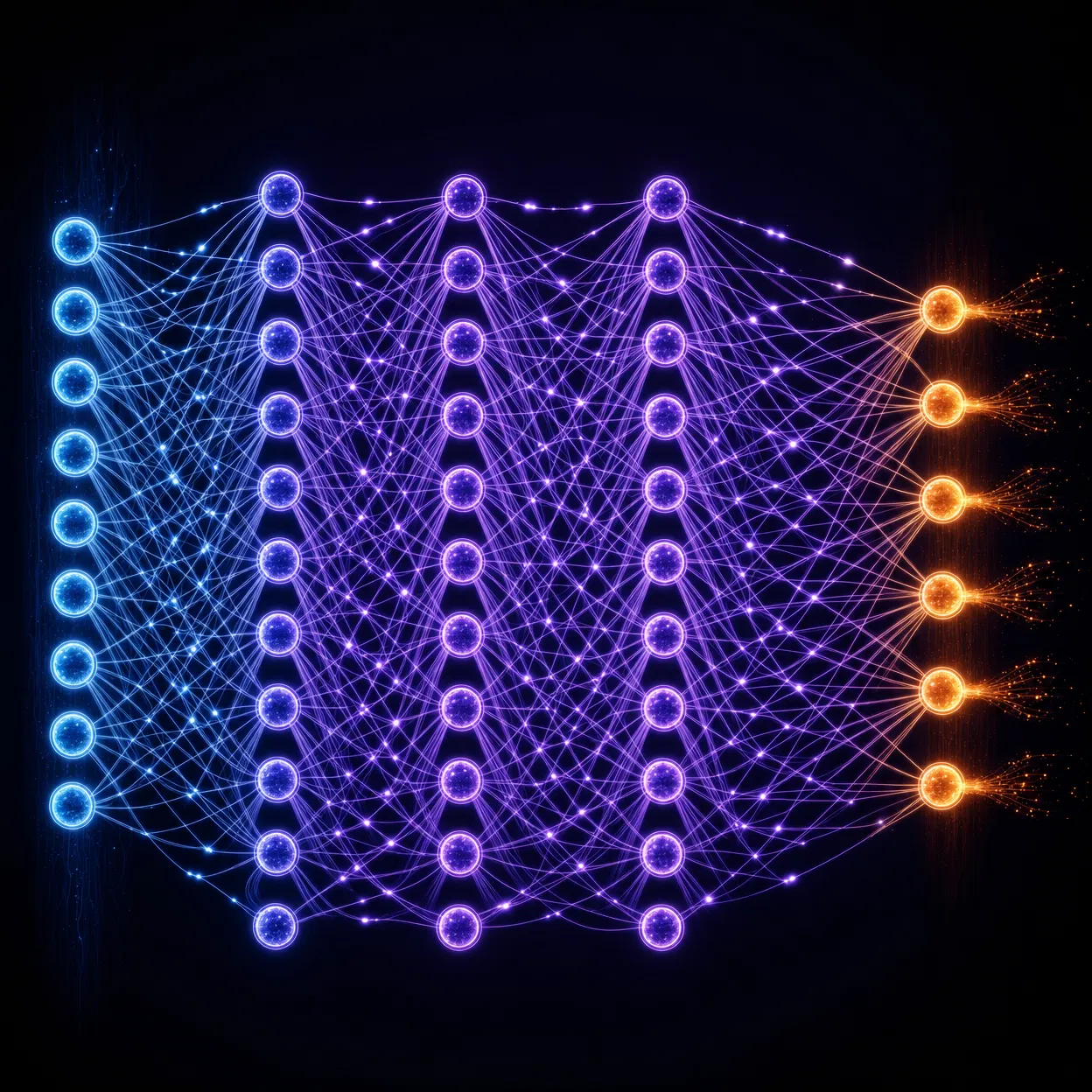

Neural networks are computational models loosely inspired by the human brain. They consist of layers of interconnected nodes (neurons) that process and transform data.

Architecture Basics

A neural network has three types of layers: an input layer that receives raw data, one or more hidden layers that extract features, and an output layer that produces the final prediction. The depth of a network is its number of hidden layers, and a network with several of them is called "deep", which is where deep learning gets its name.
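The input, hidden, and output layers described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not a production implementation: the layer sizes, the random weights, the ReLU activation, and the helper names (`dense`, `relu`, `init_layer`, `forward`) are all assumptions chosen for the example.

```python
import random

def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum of the inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Activation function (assumed here): keep positive values, zero out negatives."""
    return [max(0.0, v) for v in values]

def init_layer(n_in, n_out, rng):
    """Random weights and zero biases for a layer mapping n_in values to n_out values."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(x, layers):
    """Pass x through every layer; ReLU follows each hidden layer, the output layer stays linear."""
    for i, (weights, biases) in enumerate(layers):
        x = dense(x, weights, biases)
        if i < len(layers) - 1:
            x = relu(x)
    return x

rng = random.Random(0)
sizes = [4, 8, 8, 2]  # 4 inputs, two hidden layers of 8 neurons, 2 outputs
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]

output = forward([0.5, -1.2, 3.0, 0.1], layers)
print(len(sizes) - 2, "hidden layers, output:", output)
```

Adding more entries to `sizes` makes the network deeper without changing any other code, which is the sense in which depth is just "more hidden layers".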

How Learning Happens

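During training, the network makes predictions and compares them to the correct answers. A loss function measures the difference as a single number. Backpropagation computes how much each connection weight contributed to that loss (its gradient), and an optimizer such as gradient descent nudges each weight in the direction that reduces it. Over thousands of iterations, the network gets better at its task.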
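The predict, compare, adjust cycle can be sketched with the smallest possible model: a single neuron with one weight and one bias. The data, the mean squared error loss, and the learning rate are assumptions made for this example. With only one layer the gradient can be written out by hand, whereas backpropagation applies the same chain rule layer by layer in deeper networks.

```python
def mse(preds, targets):
    """Mean squared error: the loss that measures how far predictions are from the answers."""
    return sum((p - t) ** 2 for p, t in zip(preds, targets)) / len(targets)

# Toy data generated from y = 2x + 1 (assumed for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = 0.0, 0.0          # start with an untrained neuron
learning_rate = 0.05     # step size for each weight update
history = []             # loss recorded at every iteration

for step in range(2000):
    # 1. Predict.
    preds = [w * x + b for x in xs]
    # 2. Compare with the correct answers.
    history.append(mse(preds, ys))
    # 3. Compute gradients of the loss with respect to w and b.
    n = len(xs)
    grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
    grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
    # 4. Adjust the parameters against the gradient.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(f"w={w:.3f}, b={b:.3f}, first loss={history[0]:.3f}, last loss={history[-1]:.6f}")
```

The loss recorded in `history` shrinks as training proceeds, and `w` and `b` approach the values used to generate the data.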

Convolutional Neural Networks (CNNs)

CNNs are specialized for processing grid-like data such as images. They use convolutional filters to detect edges, textures, and shapes at multiple scales — enabling breakthroughs in image recognition and computer vision.
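To make the idea of a convolutional filter concrete, here is a minimal sketch that slides a hand-written 3x3 vertical-edge filter over a tiny image. The image, the filter values, and the function name `conv2d` are assumptions for illustration. A real CNN learns its filter values during training and typically adds padding, strides, and many channels.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the elementwise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

# A 4x6 image: dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Responds where brightness increases from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = conv2d(image, vertical_edge)
for row in feature_map:
    print(row)
```

The feature map is nonzero only where the filter window straddles the dark-to-bright boundary, so the filter acts as an edge detector for that one orientation.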

Transformers: The Architecture Behind LLMs

The Transformer architecture, introduced in 2017, revolutionized natural language processing. Its key innovation is building the model around self-attention, which lets it weigh the importance of every other word in the context when representing each word. GPT, BERT, and virtually all modern language models are built on this foundation.
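The weighting step can be sketched as scaled dot-product attention. This is a simplified illustration under stated assumptions: the three 2-dimensional token vectors are made up, and they are used directly as queries, keys, and values, whereas a real Transformer first passes them through learned projection matrices and uses multiple attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """For each query, score every key, normalize the scores, and average the values."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        output = [sum(w * v[j] for w, v in zip(weights, values))
                  for j in range(len(values[0]))]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical embeddings for three tokens.
tokens = ["the", "cat", "sat"]
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

outputs, weights = attention(x, x, x)
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to every token in the sequence, including itself. The output for a token is the average of all value vectors weighted by that row.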

Real-World Example

How GPT Understands Language

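GPT models use a Transformer architecture, a neural network that uses attention mechanisms to relate each token in its input to the tokens that came before it. During training, all positions in a sequence are processed in parallel. During generation, the model produces one token at a time, each conditioned on the preceding context. This lets the model track context across long passages and generate coherent, nuanced text.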
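GPT-style models are generally trained with a causal mask, so that each position attends only to itself and earlier positions. The sketch below shows only that masking step, using made-up uniform scores. The function name `causal_attention_weights` is hypothetical, and the rest of the model is omitted.

```python
import math

def causal_attention_weights(scores):
    """Normalize each row over positions 0..i only; later positions get weight 0."""
    weights = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]            # position i may not look ahead
        m = max(visible)
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        hidden = [0.0] * (len(row) - i - 1)
        weights.append([e / total for e in exps] + hidden)
    return weights

# Uniform raw scores for a 3-token sequence (assumed for illustration).
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
weights = causal_attention_weights(scores)
for row in weights:
    print([round(w, 3) for w in row])
```

The first token can attend only to itself, the second splits its attention over two positions, and the third over all three. This is what allows the model to generate text left to right.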

Key Takeaways

- Neural networks are loosely inspired by biological neurons in the brain

- Deep learning uses many layers to extract hierarchical features

- Backpropagation computes the gradients used to adjust weights and minimize prediction errors

- Transformers revolutionized NLP with attention mechanisms